AI as the World's Greatest Hacker – and What That Means for Me

Published: April 29, 2026

What happens once all vulnerabilities have been found, and almost all of them have then disappeared?

Two weeks ago, Anthropic made headlines with “Mythos” – a model that finds vulnerabilities like no human before it. Last week I wrote about how AI is becoming the better penetration tester.

But the more interesting question comes now: What happens when these systems don’t just find things, but systematically expose everything? And what happens after that?

We are probably at the beginning of a phase in which software worldwide will be “X-rayed.” Not selectively – but completely. Machines find bugs faster, at greater scale, and more systematically than any human community ever could.

The consequence is radical: the number of technical vulnerabilities will drop massively over the coming years.

This could, however, have significant side effects – here’s my take:

Some will be hit very hard:

Companies like NSO Group, the maker of Pegasus, will lose their business model. If AI finds the vast majority of exploitable gaps in short order, and ideally makes them patchable, few 0-day exploits will remain, and things will get very difficult for Pegasus. 0-days will become rarer, more expensive, and shorter-lived.

What happens next is almost deterministic: global IT will harden. Systems will become more robust. Many of today’s known attack paths will disappear.

In the end, two categories primarily remain:

- Vulnerabilities withheld by nation-states

- …and the human being

From here it gets uncomfortable:

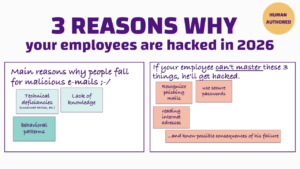

While technical attack surfaces shrink, another category is exploding: social engineering. What other option do the “bad guys” have?

The data is clear:

- Depending on the study, 47% [1] or 67% [2] of all breaches begin with phishing or social engineering

- 3.4 billion phishing emails are sent daily, and over 80% of them are already AI-generated [3]

- AI phishing campaigns are up to 42% more successful than classical attacks [4]

- 63% of security experts see AI social engineering as the top threat in 2026 [5]

And the quality is changing fundamentally – a process we can already observe today: no more typos, no more “Nigerian prince.” Instead, perfectly personalized, multi-stage attacks across email, SMS, WhatsApp, Teams, calls, and deepfake video [6].

Let’s be blunt:

Technology is becoming more secure – but humans remain vulnerable.

A second development compounds this: AI is not only a technical hacker, but increasingly the better social engineer too. The Harvard study “Evaluating Large Language Models’ Capability to Launch Fully Automated Spear Phishing Campaigns” demonstrates exactly this: AI can create more convincing and more scalable spear-phishing campaigns than humans – fully automated and significantly cheaper.

AI has been the better hacker since at least 2024.

This shifts the playing field.

The old pattern:

- Developing exploits: expensive, slow, selective

- Social engineering: manual, limited scalability

Today:

- Exploits are automatically discoverable through AI (and will soon be patched faster)

- Social engineering is automated and cheaply scalable

The result: attackers go where the returns are still good. And the highest returns lie with the human.

My hypothesis: The next two years belong to the “good guys.” We will harden systems, close gaps, stabilize infrastructure.

At the same time, attackers are already shifting their focus – away from technology, toward the user. Because when the technology is airtight, only one direct route remains: manipulation.

The target becomes emotion rather than a technical flaw. Trust rather than a buffer overflow.

Even I, as an expert, recently fell victim to an extremely well-crafted phishing campaign. The risk of this happening more frequently is real.

Conclusion: We are moving into a world where software is more secure than ever before – but where humans are more squarely in the crosshairs than ever before.

Cybersecurity has long been a technical problem. Now it is definitively becoming a behavioral problem.

And that is exactly where we need to start: we must begin changing behavior – not just protecting systems.

At CYBERDISE, we are therefore deliberately evolving: from classical awareness training toward systems that measurably improve human behavior.

Sources:

[1] IBM, Cost of a Data Breach Report 2024: https://cyberdise-awareness.com/wp-content/uploads/2026/04/Cost-of-a-Data-Breach-Report-2024.pdf