AI: The World’s Greatest Pentester — And Why It Has to Be This Way

Published Date: April 28, 2026

What happens when software is no longer attacked only by humans, but by synthetic actors that think differently than we do?

Claude developer Anthropic made headlines last week with the internal release of a new model called Mythos. It is said to be exceptionally good at finding bugs and vulnerabilities in software. Due to these capabilities, Anthropic is refraining from a public release for now and instead aims to work with large tech companies and governments to prevent misuse.

It remains unclear how realistic and actually exploitable many of these vulnerabilities are… at least for humans.

Anthropic itself classified a 16-year-old FFmpeg vulnerability as non-critical and considered a working exploit difficult. Potential vulnerabilities found in the Linux kernel could not be exploited because of its layered security mechanisms, and some appear to have already been patched.

Anthropic also admits that the thousands of reported issues are not fully verified, but rather based on extrapolation. The basis: around 90% agreement in 198 manually reviewed cases. [1]
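To get a feel for how much weight a sample of 198 reviewed cases can carry, here is a minimal sketch that computes a 95% Wilson score interval for the agreement rate. The count of 178 agreeing cases is my own assumption, chosen only because it is roughly 90% of 198; the exact figure is not given here. The function name `wilson_interval` is likewise just illustrative. The sketch also assumes the reviewed cases were a random sample of all reports, which may not hold.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Return the Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


REVIEWED = 198  # manually reviewed cases, as stated in the text
AGREED = 178    # ASSUMPTION: about 90% of 198; the exact count is not stated

low, high = wilson_interval(AGREED, REVIEWED)
print(f"Observed agreement: {AGREED / REVIEWED:.1%}")
print(f"95% Wilson interval: {low:.1%} to {high:.1%}")
```

Under these assumptions the interval spans several percentage points on either side of 90%. Applied to thousands of reported issues, that is a meaningful number of findings that may not hold up, which is why the extrapolated total should be read as an estimate.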

I am very grateful that many of these discovered bugs are unlikely to cause harm to users—whether because patches already exist, layered security concepts mitigate them at higher levels, or other safeguards are in place.

Nevertheless, these vulnerabilities must be fixed. Why? Because with AI, we have introduced an intelligent actor into our world that does not think like a human. Alongside human logic, there is now (brilliant) AI logic. We already saw this over 10 years ago with AlphaGo's Move 37. [2]

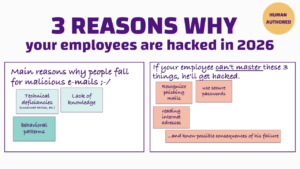

Our IT systems no longer need to be hardened “only” against human hackers, but also against AI hackers. And AI hackers are far more capable than we are of turning even the most complex and convoluted vulnerabilities into working exploits.

Conclusion: The real risk does not lie in the individual vulnerability, but in the new way of thinking that can exploit it. Many of these bugs may seem harmless today—but that is a human assessment. AI may arrive at a very different conclusion.

Security used to be a game against human creativity. Now it is a game against something that systematically scales that creativity.

This month, we will be conducting an in-depth penetration test of our cybersecurity awareness platform and its on-premises versions. I will definitely ask the team to include an AI-driven penetration test as well.